Threat modeling is all about looking at potential threats from the attacker’s point of view so that those responsible for mounting a defense can prioritize their resources and prepare appropriate responses. Where are the high-value assets? What is the attack surface? What are the potential threats? What are the otherwise unnoticed attack vectors?

Military strategists have used the concept of threat trees and threat modeling since antiquity. In fact, the first extensive treatise on the subject may have been “The Art of War,” written by the great Chinese general Sun Tzu around 512 BC. For centuries after that, the evolution of threat modeling continued along the path of prioritizing military defensive readiness.

In the early 1960s, the advent of shared computing prompted a new form of threat: the cyber-attack. Ironically, one of the earliest documented attacks [1] was perpetrated by an MIT academic, Dr. Allan Scherr, who was charged with analyzing the capabilities of the school’s new CTSS computer system. To gain more computing time for himself, Dr. Scherr accessed all the passwords for the CTSS users. Moreover, to cover his tracks, he broadly distributed the list throughout the school. Breaching username and password lists, as well as any other useful data, has been a priority for attackers ever since. Shortly after that, academics started systematically pushing the evolution of threat modeling for cybersecurity purposes.

Theoretical Evolution of Threat Modeling for IT

Early in the evolution of threat modeling, the academics focused on the concept of architectural patterns introduced [2] by Christopher Alexander in 1977. Eleven years later, Robert Barnard developed the first attacker profile. While he successfully applied the concept of attacker profiling to cybersecurity, his work was limited to only one attacker set.

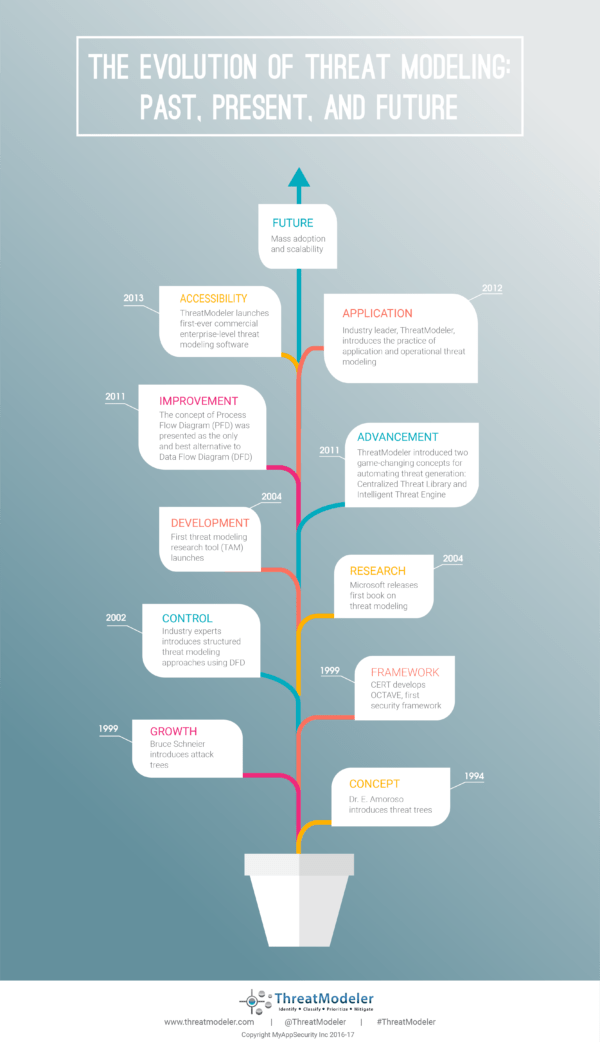

In 1994, Edward Amoroso published a book [3], “Fundamentals of Computer Security Technology,” in which he described the concept of a “threat tree.” Threat trees, like other decision tree diagrams, are graphical representations of how a potential threat to a computer system might be realized. Similar, independent work conducted by the NSA and DARPA employed a structured graphical representation of how specific attacks might be carried out, resulting in the so-called “attack tree.”

In his 1998 paper [4], “Toward a Secure System Engineering Methodology,” Bruce Schneier applied attack trees to cyber-risk analysis, which proved to be a seminal contribution to the evolution of threat modeling methodologies. Schneier represented the goal of the attack as the root node and the means of achieving that goal as leaf nodes. In this way, security professionals could systematically consider various attack vectors against any particular target.
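
The root-and-leaf structure described above can be sketched in a few lines of Python. This is only an illustration, not code from Schneier's paper: the `AttackNode` class, the `attack_paths` helper, and the example goals are all hypothetical, and every branch is treated as an alternative (an "OR" node) for simplicity.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class AttackNode:
    """A goal or sub-goal in an attack tree (hypothetical structure)."""
    label: str
    children: List["AttackNode"] = field(default_factory=list)

    def is_leaf(self) -> bool:
        return not self.children


def attack_paths(node: AttackNode, prefix: Tuple[str, ...] = ()) -> List[Tuple[str, ...]]:
    """Return every root-to-leaf path; each path is one candidate attack vector."""
    path = prefix + (node.label,)
    if node.is_leaf():
        return [path]
    paths: List[Tuple[str, ...]] = []
    for child in node.children:
        paths.extend(attack_paths(child, path))
    return paths


# Illustrative tree: the root is the attacker's goal, the leaves are concrete means.
tree = AttackNode("Read user passwords", [
    AttackNode("Obtain the password file", [
        AttackNode("Request a printout of the file"),
        AttackNode("Copy the file from a backup"),
    ]),
    AttackNode("Guess passwords online"),
])

for path in attack_paths(tree):
    print(" -> ".join(path))
```

Listing the paths this way gives a defender a checklist of attack vectors to review one by one.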

Building on the work of Schneier, Loren Kohnfelder and Praerit Garg developed [5] another threat modeling methodology, STRIDE, in 1999 to help Microsoft security professionals systematically analyze the attacks that could be carried out within individual segments of a computer system. In very simple terms, the STRIDE method asks specific questions for each node of a Schneier attack tree, e.g. “At this juncture, how can an attacker violate the authentication process?” Despite the significant contributions of Kohnfelder and Garg, the industry’s attempt to apply STRIDE beyond its intended Windows-application limits slowed the evolution of threat modeling toward a practical, commercially applicable process.
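
As a rough sketch of that question-asking step, the snippet below pairs each STRIDE category (Spoofing, Tampering, Repudiation, Information disclosure, Denial of service, Elevation of privilege) with the security property it targets and generates one question per category. The `stride_questions` function, the question wording, and the example element name are assumptions made for illustration; they are not part of any Microsoft tool.

```python
# STRIDE threat categories mapped to the security property each one violates.
STRIDE = {
    "Spoofing": "authentication",
    "Tampering": "integrity",
    "Repudiation": "non-repudiation",
    "Information disclosure": "confidentiality",
    "Denial of service": "availability",
    "Elevation of privilege": "authorization",
}


def stride_questions(element: str) -> list:
    """Build one review question per STRIDE category for a given element."""
    return [
        f"At '{element}', how could an attacker violate {prop} ({threat})?"
        for threat, prop in STRIDE.items()
    ]


# Hypothetical element taken from a system under review.
for question in stride_questions("login form"):
    print(question)
```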

In the same year that STRIDE was introduced, Carnegie Mellon University’s Software Engineering Institute introduced the Operationally Critical Threat, Asset, and Vulnerability Evaluation (OCTAVE) method [6]. This was the first risk-based assessment methodology for IT security that considered both the technological and the organizational aspects of potential threats and sought to balance them with the practicalities of security practices. The primary focus of OCTAVE is managing organizational risk.

In 2004, Frank Swiderski and Window Snyder wrote “Threat Modeling” [7], published by Microsoft Press, the first book to develop the idea of using threat modeling to write secure applications proactively. That same year, Microsoft introduced the Threat Analysis & Modeling (TAM) tool, designed to allow non-security experts to enter known information, including business requirements and application specifications, to study potential threats to the application under development. The tool used data flow diagrams (DFDs) and Schneier-style attack trees to help development teams think through potential attacks and the corresponding security measures to be implemented proactively.

Commercial Evolution of Threat Modeling for IT

DFD-based threat modeling approaches continued as the mainstay among security subject matter experts for the next seven years, but there was little adoption of the method within the development community or other non-security professionals, especially since development teams increasingly adopted the Agile development methodology.

In 2011, ThreatModeler pushed the evolution of threat modeling toward automated threat analysis with the Centralized Threat Library and the Intelligent Threat Engine, thereby paving the way for threat modeling that could integrate with developers’ Agile production environments.

In the same year, ThreatModeler introduced the concept of the process flow diagram (PFD). Unlike the traditional DFDs, which represent how an application manipulates data at various junctures within a computer system, PFDs graphically demonstrate how a user moves between features of an application. PFDs create an application “map” in much the same way a developer – or a potential attacker – thinks about an application.
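
One way to picture a PFD is as a directed graph whose nodes are application features and whose edges are the moves a user can make between them. The sketch below is a generic illustration under that assumption; the feature names, the `pfd` dictionary, and the `reachable_features` helper are hypothetical and do not reflect ThreatModeler's actual diagram format.

```python
from collections import deque

# Hypothetical process flow: each feature maps to the features a user can reach next.
pfd = {
    "Login": ["Dashboard"],
    "Dashboard": ["View Statement", "Transfer Funds"],
    "Transfer Funds": ["Confirm Transfer"],
    "View Statement": [],
    "Confirm Transfer": [],
}


def reachable_features(flow: dict, start: str) -> list:
    """Breadth-first walk listing every feature a user can reach from `start`."""
    seen = [start]
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for nxt in flow.get(current, []):
            if nxt not in seen:
                seen.append(nxt)
                queue.append(nxt)
    return seen


print(reachable_features(pfd, "Login"))
```

Walking the graph from an entry point such as the login feature shows which features sit behind it, which is the same "map" view the paragraph above attributes to developers and attackers.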

The following year, ThreatModeler developed the concept that application threat modeling and operational threat modeling are separate functions. Application threat modeling helps development teams proactively integrate security requirements in their initial coding in an Agile development environment. Quite separately, operational threat modeling analyzes the end-to-end data flow through an organization’s infrastructure, allowing organizations to understand the “big picture” of their organizational risk profile.

In 2013 ThreatModeler produced the first commercial, automated, enterprise threat modeling tool. It was built around the Visual, Agile, and Simple Threat modeling methodology (VAST) developed by Anurag “Archie” Agarwal, the Chief Technical Architect of ThreatModeler.

The Continuing Evolution of Threat Modeling

Enterprises today seek to proactively and more effectively mitigate their application-level and organizational-level risk profiles. Increasingly they depend on vendor-initiated developments to push the technological boundaries and provide actionable modeling outputs. Presently, organizations embracing the cutting edge of threat modeling can:

- Intelligently identify, in real-time, relevant threats within a contextualized risk;

- Prioritize and collaborate enterprise-wide on risk-mitigation efforts;

- Create organization-wide threat portfolio reports;

- Continuously reduce the cost of vulnerability remediation even as they minimize their risk exposure.

As commercialized threat modeling continues toward becoming mainstream, new advancements in intelligent, automated threat identification and proactive remediation following the VAST modeling methodology will continue to define the cutting edge of cybersecurity.

References:

[1] https://www.wired.com/2012/01/computer-password/

[2] https://news.asis.io/sites/default/files/Threat%20Modeling.pdf

[3] http://dl.acm.org/citation.cfm?id=179237

[4] https://www.schneier.com/academic/paperfiles/paper-secure-methodology.pdf

[5] https://www.scribd.com/document/203743901/Shostack-ModSec08-Experiences-Threat-Modeling-at-Microsoft

[6] http://www.itgovernanceusa.com/files/Octave.pdf

[7] https://www.amazon.com/Threat-Modeling-Microsoft-Professional-Swiderski/dp/0735619913